Real Users, Real Feedback, Before the Feature Exists

The most expensive mistake in product development is building something nobody wants. The second most expensive is building the right thing the wrong way. Both mistakes share the same root cause: skipping real-world feedback in favor of assumptions.

There's a way around this. Claude's ecosystem — skills, projects, and artifacts — gives you a rapid prototyping platform that puts your core feature in front of real users in days, not months. No infrastructure to build. No deployment pipeline to configure. Just a working feature that people use for real tasks, generating feedback that shapes every product decision before you commit engineering resources.

I've been using this approach to validate AI-generated job application documents — tailored resumes and cover letters with fit scoring — while the feature is still months out on my web application's roadmap. Real users are generating real documents, submitting them to real job applications, and telling me what works and what doesn't. Here's how the methodology works, and why any product builder can use it.

The Claude.ai Ecosystem as a Prototyping Toolkit

Claude's ecosystem offers three distinct prototyping channels, each reaching a different user segment while testing the same core value proposition.

- Claude Code skills turn a full feature into a slash command. Technical users get the complete workflow — fit assessment, experience matching, document generation — in a single terminal command. No infrastructure, no deployment, no accounts. If you can express your feature as a skill, you can ship it to power users today.

- Claude Projects (webchat) put the same feature on the free tier. Users upload their resume, paste a job description, and get tailored documents in a conversational flow. No app to download, no install — just a Claude.ai account. This is how you reach non-technical users immediately.

- Claude Artifacts create visual, interactive prototypes that feel like product UI. Requirement-by-requirement scoring, gap analysis in a scannable layout, data persisting in browser storage. This is where you test design assumptions without building a frontend.

The key architectural insight: all three interfaces share the same fit assessment framework, style presets, and document generation engine. Improvements to the shared components roll out to every interface simultaneously.
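One way to picture that shared-core design is a single assessment function with thin per-interface renderers around it. The sketch below is illustrative only: `assess_fit`, `RequirementScore`, the naive keyword matching, and the three renderer names are assumptions made for this example, not the actual skill implementation.

```python
from dataclasses import dataclass


@dataclass
class RequirementScore:
    requirement: str
    matched: bool


def assess_fit(requirements: list[str], experience: str) -> list[RequirementScore]:
    """Shared core (hypothetical): naive keyword match per requirement."""
    text = experience.lower()
    return [RequirementScore(r, r.lower() in text) for r in requirements]


def fit_percent(scores: list[RequirementScore]) -> int:
    """Share of requirements matched, as a whole-number percentage."""
    if not scores:
        return 0
    return round(100 * sum(s.matched for s in scores) / len(scores))


# Thin per-interface renderers: each one only formats the shared result.
def render_terminal(scores: list[RequirementScore]) -> str:
    return "\n".join(f"[{'x' if s.matched else ' '}] {s.requirement}" for s in scores)


def render_chat(scores: list[RequirementScore]) -> str:
    gaps = [s.requirement for s in scores if not s.matched]
    return f"Fit: {fit_percent(scores)}%. Gaps: {', '.join(gaps) or 'none'}"


def render_artifact(scores: list[RequirementScore]) -> dict:
    # Data an interactive UI could lay out as a scannable scorecard.
    return {"fit": fit_percent(scores), "rows": [(s.requirement, s.matched) for s in scores]}
```

Because the renderers never score anything themselves, a change to `assess_fit` reaches all three outputs at once, which is the property the paragraph above describes.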

Why This Feature Couldn't Wait

I'm building a career development platform. Job application documents — tailored cover letters and resumes with fit scoring — are months out on the roadmap, behind skill profiling, training integrations, and infrastructure. If I build sequentially, I won't put documents in front of real users until after months of investment. By then, I still won't know the answers to the questions that determine whether this product succeeds:

- Do people value the service? Will they use AI-generated documents for real applications?

- Do they trust the output? Are they confident enough to submit what the AI produces?

- Do the formats meet expectations? Are the cover letter and resume structures what hiring managers expect?

- Do the documents get results? Do customized applications outperform what people create on their own?

- Are the resumes ATS-compatible? Is the format optimized for automated parsing by Applicant Tracking Systems, the software that pre-populates application fields from uploaded resumes?

Those questions can't wait. The investment in everything else on the roadmap only makes sense if the core value proposition holds up with real users.

What Real Users Told Us

"That was easy and the resume is slick."

"It's sooo much easier than manual customization..."

"This is amazing, I'm going to tell EVERYONE about this."

These reactions validate the core proposition. But the most useful feedback came when users got specific:

"...writing a cover letter for a more creative role needs to show more personality, whereas that might be frowned upon from a more technical job."

This directly validated the style preset system and told us the presets need more nuance — the spectrum between "professional" and "creative" has more gradations than three buckets can capture.

"I don't think more templates would help. Sometimes the more simple and easy for the company's platform to understand and translate the better. It's no longer about getting a human's attention. You have to get past the AI software before a human even looks at it."

This is exactly the kind of insight you only get from people navigating the job market right now. It reframed the entire document generation strategy around machine readability first, human appeal second.

Then the cover letter feedback pushed in the opposite direction:

"The cover letter should be a letter and feel like it, not a memo. It's worth it to write like a human with some humanity :)"

This tension is the whole product challenge in one feedback loop: resumes need to be machine-optimized for ATS parsing, but cover letters need to feel human — warm, narrative, personal. That's not something I would have designed correctly from assumptions alone.

When Users Found a Real Bug

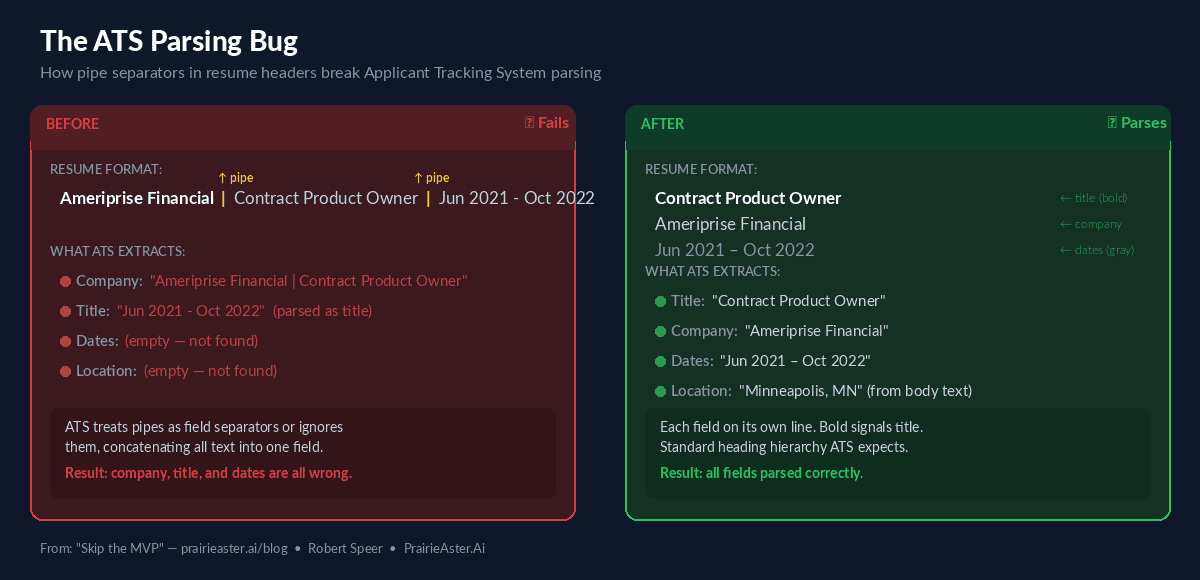

The most concrete feedback-to-fix cycle came from ATS testing. Users reported that the "fill from resume" feature in applicant tracking systems wasn't parsing employer names correctly. The root cause: company names and dates were combined on one line with a pipe separator — a format that looks clean to humans but that ATS parsers can't reliably tokenize.

The fix: separate employer information into three distinct lines (title, company, dates), add semantic heading styles that applicant tracking systems recognize, and include the location fields that parsers expect. Because the generation engine is shared across all three interfaces, the fix shipped to every user simultaneously.
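To make the layout change concrete, here is a minimal sketch of the two renderings. The `Role` fields, the function names, the sample values, and the placement of location on its own line are assumptions for illustration. Heading styles are not modeled, and the real generator may differ.

```python
from dataclasses import dataclass


@dataclass
class Role:
    title: str
    company: str
    location: str
    dates: str


def render_piped(role: Role) -> list[str]:
    """Old layout (assumed): company and dates share one pipe-separated line."""
    return [role.title, f"{role.company} | {role.dates}"]


def render_ats_friendly(role: Role) -> list[str]:
    """Revised layout: title, company, and dates each get a line, plus location."""
    return [role.title, role.company, role.location, role.dates]


# Made-up sample data, only to show the difference in output shape.
role = Role("Data Analyst", "Acme Corp", "Denver, CO", "2021 - 2024")
print("\n".join(render_piped(role)))
print("\n".join(render_ats_friendly(role)))
```

In the revised layout each line carries exactly one field, so a parser does not have to guess where the company name ends and the dates begin.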

Timeline from user report to deployed fix: days. No sprint planning, no release train, no deployment pipeline. A user hit a real problem with a real application, and every interface had the fix before the next person applied.

From Prototype Feedback to Product Decisions

Every piece of feedback maps directly to a product decision in the web application:

- Review-before-generation is mandatory. The interfaces that show users the fit assessment before generating documents produce better outcomes, so the web app will make that review a first-class workflow step.

- ATS optimization over visual design. Users told us that getting past automated parsing matters more than impressing a human reader. This reframed the entire resume generation strategy around machine readability.

- Dual-tone generation. Cover letters need warmth and narrative; resumes need clean structure for machines. The same engine must produce both, with fundamentally different goals, from the same input.

- Profile persistence moved from nice-to-have to critical path. Users expect the system to remember their experience across sessions. This reshuffled the roadmap.
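Two of these decisions, the mandatory review step and dual-tone generation, can be sketched together. The example below is a rough illustration under stated assumptions: `GenerationSpec`, `build_prompts`, the `assessment_reviewed` flag, and the prompt wording are all hypothetical, and profile persistence is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationSpec:
    document: str
    goal: str
    tone: str


# Hypothetical specs: the same input profile, a different goal per document type.
RESUME = GenerationSpec("resume", "clean structure for ATS parsing", "plain")
COVER_LETTER = GenerationSpec("cover letter", "warm, narrative, personal letter", "human")


def build_prompts(profile: str, job_description: str, assessment_reviewed: bool) -> dict[str, str]:
    """Return one prompt per document, refusing to run before the review step."""
    if not assessment_reviewed:
        raise ValueError("review the fit assessment before generating documents")
    return {
        spec.document: (
            f"Write a {spec.document}. Goal: {spec.goal}. Tone: {spec.tone}.\n"
            f"Profile: {profile}\nJob: {job_description}"
        )
        for spec in (RESUME, COVER_LETTER)
    }
```

Keeping the goal and tone in data rather than in separate code paths is one way to let a single engine serve both documents from the same input.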

The Broader Takeaway

The traditional MVP still means weeks of infrastructure — authentication, hosting, databases, deployment — before users see the core feature. For an early-stage product with unvalidated assumptions, that's a significant bet.

Claude's ecosystem skips the infrastructure layer entirely for validation. The total investment across all three interfaces was days, not months. The feedback has also been more useful than what I would expect from a survey or user interview, because people are using the tool for real applications with real stakes. Nobody fills out a survey with the same care they put into a job application they're actually submitting.

The methodology is reusable: any product feature that can be expressed as a skill, a project workflow, or an interactive artifact can be validated this way before committing engineering resources. If your feature solves a real problem, give it to real users in the environment where they already work. The feedback will be better than anything you can get from a prototype behind a login wall.

Try it yourself: The interactive artifact generates tailored resumes and cover letters directly in your browser, and all implementations are open source at PrairieAster-Ai/claude-code-skills.

If you're building AI features and want to discuss prototyping across interfaces, reach out on LinkedIn or book time.